AI and the Future of Work: Policy Lessons from Acemoglu and Restrepo

Artificial intelligence—especially large language models and the systems built on them—sits at the center of public debate about work and wages. Techno-optimists expect a productivity surge so large that living standards rise broadly and leisure expands. Pessimists foresee widespread displacement, weaker wage growth, heavier demands on the remaining workforce, and a sharper concentration of income and power.

This dichotomy is not only reductive; it mislocates causality. The economic impact of AI (or any emerging technology) is not determined by the technology in the abstract, but by the direction of adoption: which tasks are automated, which new tasks are created, and how incentives and institutions shape whether AI complements workers or substitutes for them.1 In the task-based framework developed by Daron Acemoglu and Pascual Restrepo, AI is not destiny, but a set of choices that are constrained by costs, organizational design, and policy. Institutions, and even innovations themselves, can expand the set of tasks in which workers remain productive and valued. Or they can restrict this set of tasks, potentially accelerating the very job loss they mean to prevent.

The policy stakes follow directly from this task-based view of technology. Regulation can do more than slow innovation or adoption at the margin: it can shape the direction of innovation by nudging firms toward narrow, compliance-safe automation that substitutes for workers, and away from platform-style deployments that reorganize work, raise output, and create new human-complementary tasks. In that world, “protective” rules can perversely amplify job loss while also preventing the diffusion of the very productivity gains that would otherwise lift wages and living standards.

Across a series of papers, Acemoglu and Restrepo’s task-based approach offers lawmakers a disciplined way to think about “getting AI policy right.” The core objective is not to pick winners among technologies, but to align incentives so that AI adoption expands the set of tasks in which workers remain productive, supporting broad-based growth rather than a one-sided shift toward labor-replacing automation. Their approach is also a warning: if policy or practice confines AI to familiar, narrowly defined tasks, the creation of new tasks may slow, and net job loss may rise. That is, policies that restrict AI in the name of protecting jobs may, in practice, hinder new job creation and thus speed displacement rather than prevent it.

Displacement and Productivity: A Tensive Couple

Standard economic theory often describes technological progress in factor-augmenting terms: technology makes labor, capital, or both more productive. In a standard production function, output is produced with labor and capital, and technology raises the effectiveness of each: better tools for workers, better machines for firms. When economists model automation, then, they often treat it as a form of capital-augmenting progress: machines become more effective, so a given amount of capital produces more. This framing leaves implicit, however, that the set of tasks in production is taken as largely fixed. Technology changes efficiency, not the boundary between what workers and machines do.

Acemoglu and Restrepo (2018) argue that the standard, factor-augmenting view misses what is economically distinctive about automation: namely, that it changes who performs which tasks.2 It allows machines, software, or algorithmic systems (capital) to perform specific tasks that previously required workers, thereby reallocating tasks from labor to capital. In their task-based approach, automation is best understood as an expansion in the set of tasks that can be produced using capital, which directly reshapes labor demand rather than merely raising the productivity of an input.

When a task previously performed by workers can be carried out by machines or software at comparable (or higher) quality and at lower effective cost, firms have a strong incentive to reallocate that task away from labor. Acemoglu and Restrepo call the resulting decline in task-specific labor demand the displacement effect: automation reduces the demand for workers in the activities that become machine-performable. This can occur even when the near-term productivity gains are modest because substitution in a particular task does not require a one-for-one increase in aggregate efficiency.

Labor-market anxiety over AI comes from the displacement effect. If AI systems perform an expanding set of complex tasks with limited human input, some job losses in affected occupations are a plausible outcome. But Acemoglu and Restrepo emphasize that displacement is only one side of the ledger. A set of countervailing forces can offset or even dominate displacement. Which forces prevail depends on how AI is deployed (shaped by incentives, regulation, and organizational design) and on how quickly markets and workers adjust.

A central countervailing force is the productivity (or scale) effect. When automation lowers unit costs or raises efficiency, firms can expand output: producing more, serving more customers, or offering higher-quality goods at lower prices. That expansion tends to increase overall economic activity and can raise labor demand even in sectors adopting automation since many tasks remain complementary to the technology. Moreover, the gains need not stay contained within the automating sector: lower prices raise real incomes, which shifts consumer spending toward other goods and services and boosts labor demand elsewhere in the economy.
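The interaction between displacement and productivity can be written compactly in the Cobb-Douglas special case of the task framework. What follows is a stylized sketch rather than a reproduction of the authors' full model. It assumes tasks are indexed on the unit range from $N-1$ to $N$, tasks up to $I$ are performed by capital $K$, the rest are performed by labor $L$, and task-level productivities are normalized to one. Under those assumptions, output $Y$ and the wage $W$ are:

$$
Y = \left(\frac{K}{I-N+1}\right)^{I-N+1}\left(\frac{L}{N-I}\right)^{N-I},
\qquad
W = (N-I)\,\frac{Y}{L}.
$$

Taking logs of the wage expression and differentiating with respect to the automation threshold $I$ separates the two forces:

$$
\frac{d\ln W}{dI}
= \underbrace{\frac{d\ln(N-I)}{dI}}_{\text{displacement effect}\;=\;-\frac{1}{N-I}\;<\;0}
\;+\;
\underbrace{\frac{d\ln(Y/L)}{dI}}_{\text{productivity effect}}.
$$

The first term is always negative because automation shrinks labor's share of tasks, $N-I$. The second term is positive when automating the marginal task lowers costs, and the wage rises only if it is large enough to outweigh the first.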

Two examples solidify the mechanism. Take the ATM: cash machines automated routine transactions, but lower operating costs and a shift toward relationship banking helped many institutions expand their footprint and range of services, with tellers reallocating toward customer-facing, advisory, and sales-oriented tasks rather than disappearing one-for-one. A second example is mechanized agriculture. As productivity rose and food prices fell, households devoted a smaller share of income to basic necessities, freeing demand for manufactured goods and services. This is a classic channel through which productivity gains in one sector translate into employment growth in others.

Alongside productivity, Acemoglu and Restrepo note two additional offsets to displacement: capital accumulation and “deepening” automation.

Capital accumulation is the idea that as firms invest and capital stock grows, many tasks still require people who operate and maintain equipment, coordinate processes, manage quality, sell, and improve production. When investment expands the scale of production, demand rises for these complementary, human-intensive tasks. In that sense, capital and labor complement one another in the aggregate: more capital can raise the marginal value of labor in certain tasks around machines.

“Deepening” automation captures a different margin. Once a set of tasks has already been automated, further improvements to the automated system can raise efficiency and output without substituting for additional workers, since most of the direct “replacement” has already occurred. After a production line has been automated, incremental advances are often less about headcount reduction and more about higher throughput, fewer errors, lower unit costs, and higher quality. Those gains can still support employment and wage growth indirectly by expanding output and raising real incomes without triggering another round of direct displacement.

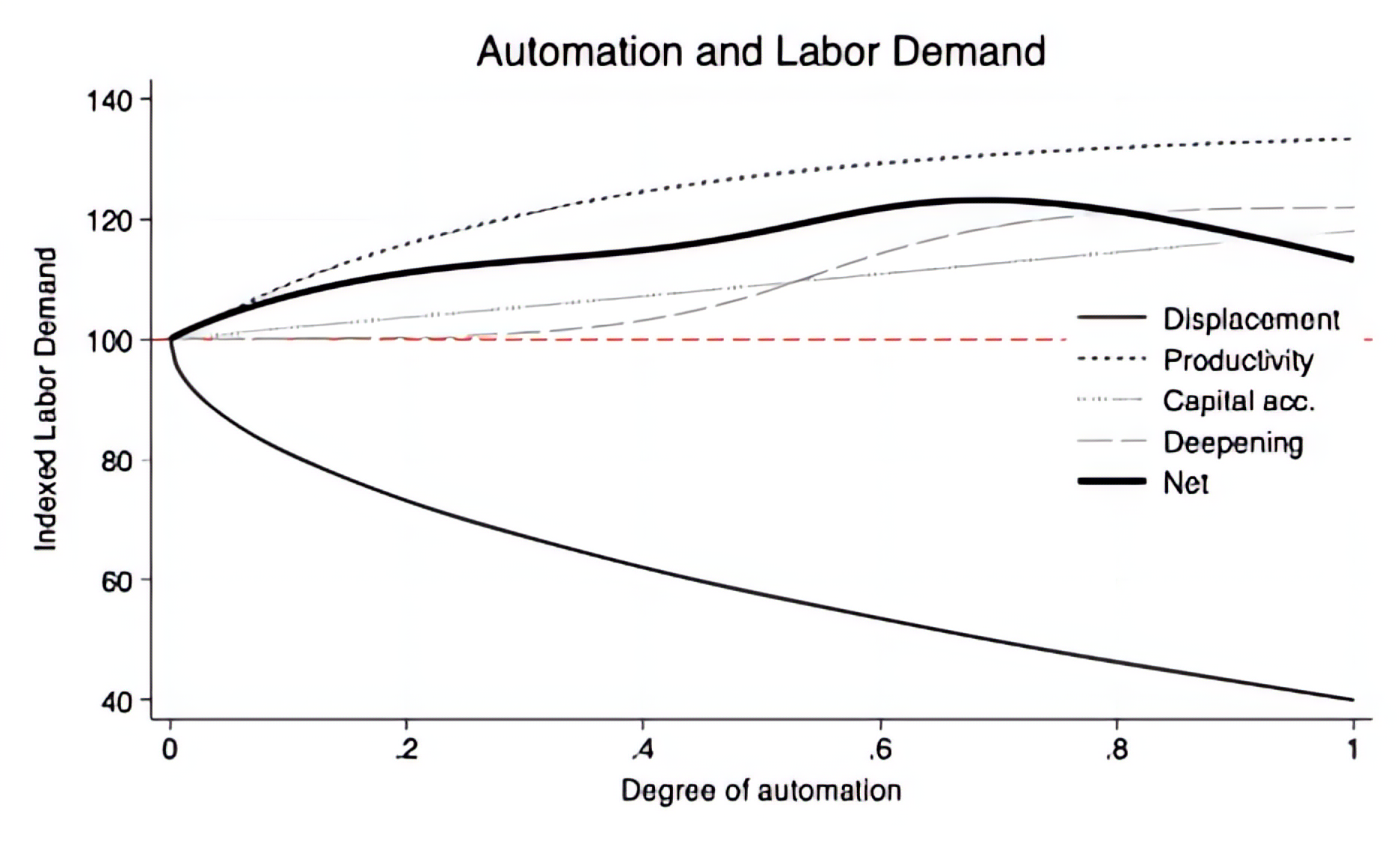

The figure displays the logic of Acemoglu and Restrepo’s model in a stylized setting. Start from a baseline in which the firm employs 100 workers. The horizontal baseline marks that initial labor-demand level in red. The x-axis measures the degree of automation in the production process, so 0.2 corresponds to 20 percent of relevant tasks being performed by machines. The downward-sloping displacement curve shows the direct substitution of capital for labor as automation expands. In this example, displacement is steepest early on, when the first tranche of tasks becomes automatable, and then tapers as the remaining tasks are harder to substitute.

The other lines depict offsets. The productivity and scale curve rises as automation lowers unit costs and expands output, which increases labor demand in the tasks that remain complementary to production. The capital-accumulation curve rises more gradually as investment growth expands scale and raises demand for human-intensive tasks, such as operations, maintenance, coordination, and sales. Finally, the deepening-automation curve increases later when improvements to already-automated systems raise throughput and quality without requiring additional rounds of direct labor replacement. Together, these offsets can keep net labor demand from falling as sharply as the displacement effect alone would imply. In this simulation, they allow net labor demand to remain above the baseline across automation intensities.
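The shape of such a decomposition can be sketched in a few lines of Python. This is a minimal illustration only: the functional forms and coefficients below are hypothetical, chosen to mimic the qualitative pattern described above (early, tapering displacement; a rising productivity curve; gradual capital accumulation; late-arriving deepening). They are not the parameters behind the figure. Only the 100-worker baseline comes from the text.

```python
import math

BASELINE_WORKERS = 100.0  # baseline employment, as in the text


def displacement(a):
    """Direct labor substitution: steepest early, then tapering (hypothetical form)."""
    return -30.0 * (1.0 - math.exp(-4.0 * a))


def productivity_scale(a):
    """Labor demand added as lower unit costs expand output (hypothetical form)."""
    return 40.0 * (1.0 - math.exp(-4.0 * a))


def capital_accumulation(a):
    """Gradual rise in complementary, human-intensive tasks (hypothetical form)."""
    return 15.0 * a


def deepening(a):
    """Gains from improving already-automated tasks; arrives later (hypothetical form)."""
    return 20.0 * max(0.0, a - 0.5)


def displacement_only(a):
    """Labor demand if displacement were the only force at work."""
    return BASELINE_WORKERS + displacement(a)


def net_labor_demand(a):
    """Baseline plus displacement plus the three offsets."""
    return (BASELINE_WORKERS + displacement(a) + productivity_scale(a)
            + capital_accumulation(a) + deepening(a))


# Automation intensity a runs from 0 (no tasks automated) to 1 (all automated).
for a in (0.0, 0.2, 0.5, 0.8, 1.0):
    print(f"automation={a:.1f}  displacement only={displacement_only(a):6.1f}  "
          f"net={net_labor_demand(a):6.1f}")
```

With these assumed curves, net labor demand stays above the 100-worker baseline at every positive automation level. That result depends entirely on the chosen coefficients; weaker offsets would push the net curve below the baseline.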

The above simulation covers only one firm. While countervailing forces mitigate displacement, they do not guarantee that automation is neutral or beneficial for workers in the aggregate. The reason is distributional. Automation tends to reallocate tasks toward capital. Even when productivity rises, wages need not keep pace if the marginal product of labor grows more slowly than the returns to capital, or if bargaining and market structure allow a larger share of the gains to accrue to capital owners. When capital becomes cheaper relative to labor, or performs at least as well, firms have an incentive to substitute away from workers, which puts downward pressure on labor’s share of income. At the same time, a complete accounting must be general equilibrium, that is, it must look across firms: job creation can occur outside the automating firm through lower prices, higher real incomes, new complementary tasks, and even new firm entry, all channels that the single-firm illustration necessarily abstracts from.3

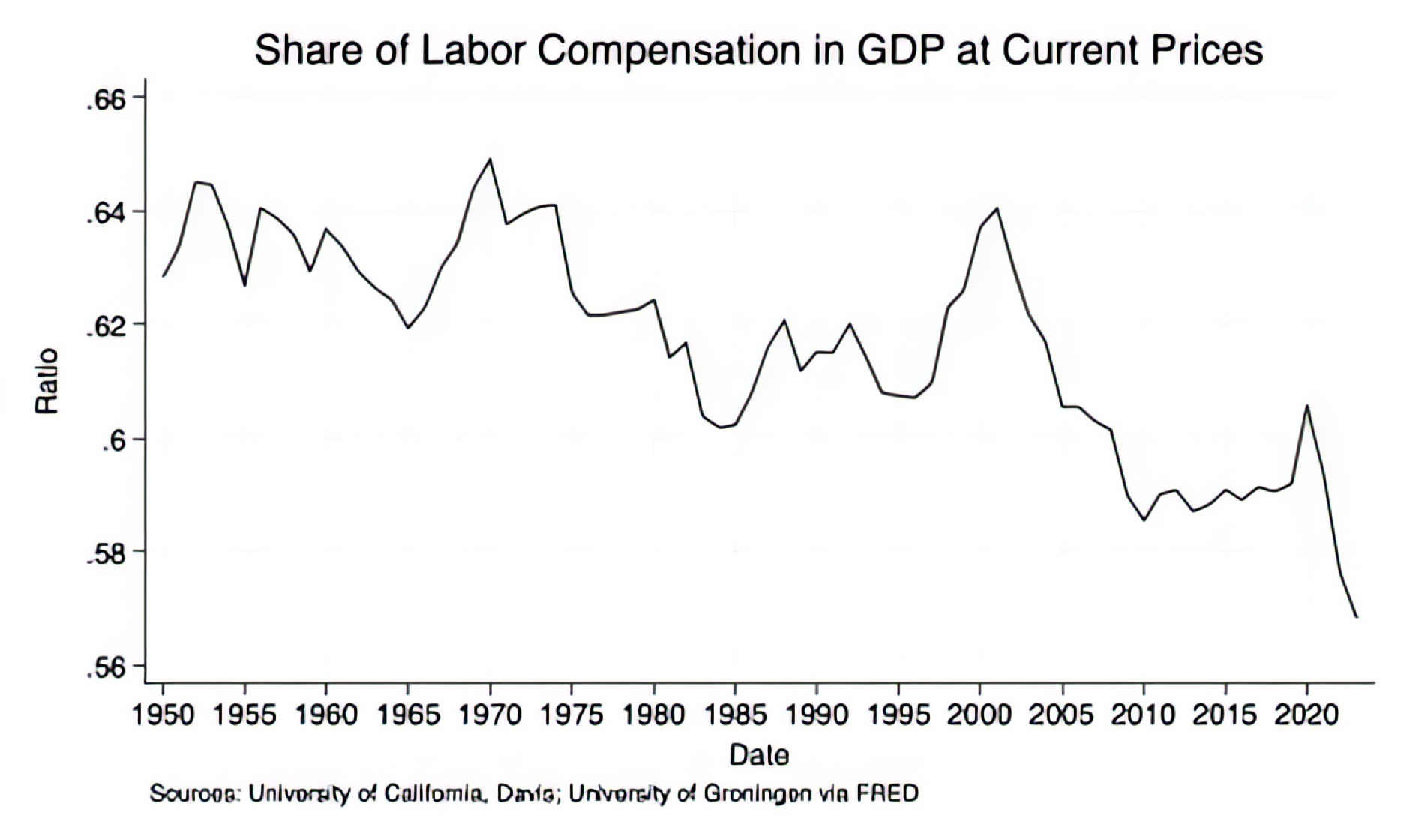

Despite the logic above, the long-run history of automation presents a puzzle. If automation mechanically and monotonically pushed the economy toward lower labor demand and a steadily shrinking labor share, we would expect labor’s share of income to collapse over time. That has not happened.4 The figure above plots the share of labor compensation in GDP at current national prices.5 From 1950 through much of the postwar era, the series is relatively stable, even as the U.S. economy undergoes major structural change. Beginning in the later decades of the twentieth century, the series shows a clearer downward drift, but it is hardly a smooth, one-way slide. There are pronounced swings, declines, partial recoveries, and plateaus, not mechanical erosion. The point is that displacement alone does not describe the data well. Explaining the observed path likely requires a combination of mechanisms, and pinning down their relative importance is an empirical task. The figure alone cannot settle this. Still, Acemoglu and Restrepo argue that many standard accounts miss a central channel in the long-run adjustment to automation: the creation of new tasks in which labor retains a competitive advantage.

The Reinstatement Effect & “So-so” Automation

On a modern assembly line, industrial robots can take over physically demanding and highly repetitive tasks, such as welding, joining, lifting, and precision placement. But the diffusion of robotics also expands complementary work: system design and integration, programming and calibration, monitoring and quality control, maintenance and repair, and process optimization. Acemoglu and Restrepo call this the reinstatement effect: technological change can create new tasks (within and outside a firm) in which humans retain a comparative advantage. By expanding the set of tasks in which labor is productive and valued, reinstatement helps offset displacement. The crucial point is that this task creation is not automatic; it depends on the direction of innovation and the incentives shaping deployment.
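A small bookkeeping sketch makes the offset concrete. It assumes a stylized Cobb-Douglas version of the task framework in which tasks are indexed on a unit range from N - 1 to N, tasks up to a threshold I are automated, and labor's share of income equals the share of tasks workers perform, N - I. The function name and the scenario values are hypothetical, chosen only for illustration.

```python
def task_shares(I, N):
    """Return (capital_share, labor_share) of tasks on the unit range [N - 1, N].

    I is the automation threshold: tasks at or below I are done by capital,
    tasks above I are done by labor. Stylized assumption, not an estimate.
    """
    if not (N - 1.0 < I < N):
        raise ValueError("need N - 1 < I < N so both factors perform some tasks")
    capital_share = I - (N - 1.0)
    labor_share = N - I
    return capital_share, labor_share


# Hypothetical scenarios: (automation threshold I, newest task N).
scenarios = {
    "baseline": (0.3, 1.0),
    "after automation (I rises)": (0.5, 1.0),
    "after new tasks (N rises)": (0.5, 1.2),
}

for name, (I, N) in scenarios.items():
    capital_share, labor_share = task_shares(I, N)
    print(f"{name:28s} capital={capital_share:.2f}  labor={labor_share:.2f}")
```

In this sketch, automation raises I and cuts labor's share of tasks. New tasks raise N and restore it, because the newest tasks go to workers while the oldest automated tasks drop out of the range. How much new-task creation actually occurs is the empirical question the text raises; the sketch only shows the accounting.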

Acemoglu and Restrepo also offer a grounded reason for optimism about AI’s reinstatement potential. They argue that AI is often better understood as a general-purpose platform, rather than only “a narrow set of technologies with specific, pre-determined applications.”6 Platforms differ from standalone applications in that they can be recombined with existing workflows, data, and tools, and can enable new tasks and complementarities rather than only automating old ones. A search-and-retrieval model, for example, can reduce time spent gathering information. But once embedded in an analyst’s workflow, it can also enable higher-value work: rapid sensitivity analysis, scenario construction, data cleaning and reconciliation, hypothesis generation and testing, and translating qualitative evidence into quantitative inputs. In this way, AI can simultaneously automate portions of existing jobs while expanding the frontier of what workers can productively do.

Like earlier waves of automation, the current wave of AI can drift into what Acemoglu and Restrepo call “so-so automation,” where machines substitute for workers in tasks with modest productivity gains and where few new, labor-complementary tasks emerge at scale. Contrast a narrowly improved tool with a more general-purpose platform.7 A typewriter, relative to handwriting, made the task of producing legible text faster and cheaper, but did not, by itself, open a large frontier of new complementary tasks. The computer, on the other hand, became a platform that could be recombined across domains and enabled entirely new categories of activity and occupation, from software development and systems administration to digital design, online publishing, and cybersecurity. The point is not that one device replaced one job, and the other did not; it is that platforms generate reinstatement by creating and scaling new tasks, whereas narrow tools mainly deliver substitution in a limited set of activities. If computing were confined to word processing, the task-creation channel would have been far weaker.

Acemoglu and Restrepo worry that a meaningful share of real-world AI deployment could drift into so-so automation. In many business settings, applications like speech and image recognition may represent only incremental improvements over what humans already do well, which can translate into limited economy-wide gains, especially once supervision, error handling, and the costs of integrating systems into real workflows are taken into account. Similarly, replacing call-center agents with synthetic voices can reduce headcount without an obvious pathway to new tasks at scale for displaced workers. Without complementary redesign that leads to new products, new services, and reengineered workflows that expand output and create genuinely human-intensive activities, these deployments are less likely to deliver a productivity revolution and more likely to amount to labor substitution with limited spillovers into job creation.
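The difference between so-so and highly productive automation can be illustrated numerically. The sketch below assumes a stylized Cobb-Douglas task model with fixed capital and labor, in which the wage equals labor's task share times output per worker. The parameter `machine_productivity` and every number used are hypothetical; they exist only to show how the sign of the wage effect depends on the size of the productivity gain.

```python
import math


def output(K, L, I, N=1.0, machine_productivity=1.0):
    """Output when tasks in (N - 1, I] use capital and tasks in (I, N] use labor."""
    capital_tasks = I - (N - 1.0)
    labor_tasks = N - I
    if capital_tasks <= 0 or labor_tasks <= 0:
        raise ValueError("need N - 1 < I < N")
    return ((machine_productivity * K / capital_tasks) ** capital_tasks
            * (L / labor_tasks) ** labor_tasks)


def wage(K, L, I, N=1.0, machine_productivity=1.0):
    """Wage = labor's share of tasks times output per worker."""
    return (N - I) * output(K, L, I, N, machine_productivity) / L


def log_change(f, K, L, I_old, I_new, machine_productivity):
    """Log change in f (output or wage) when automation rises from I_old to I_new."""
    before = f(K, L, I_old, machine_productivity=machine_productivity)
    after = f(K, L, I_new, machine_productivity=machine_productivity)
    return math.log(after) - math.log(before)


K, L = 100.0, 100.0  # hypothetical factor supplies, held fixed
for label, gamma in (("so-so", 1.5), ("highly productive", 50.0)):
    d_output = log_change(output, K, L, 0.5, 0.6, gamma)
    d_wage = log_change(wage, K, L, 0.5, 0.6, gamma)
    print(f"{label:18s} log output change={d_output:+.3f}  log wage change={d_wage:+.3f}")
```

In both cases labor's share of tasks falls from 0.5 to 0.4. With the modest productivity advantage, output barely rises and the wage falls. With the large one, the productivity effect outweighs displacement and the wage rises. Holding capital fixed is a simplification, since capital accumulation would add a further offset.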

Some of the most promising uses of AI today align with reinstating technological change, that is, applications that expand what workers can do rather than merely substituting for them. In practice, this is where agentic systems matter. An agent, or coordinated set of agents, can execute a multi-step workflow with limited supervision: retrieving information, calling tools, producing intermediate outputs, and iterating toward a defined goal. While certain tasks are automated, performance remains shaped by human direction as workers specify objectives, set constraints, and review and correct outputs.

This is the platform role Acemoglu and Restrepo emphasize. AI is not confined to a single predetermined application; it can be recombined with human judgment, domain knowledge, and organizational processes to open up new tasks and workflows. The empirical question is whether these capabilities scale into durable task creation rather than stopping at narrow substitution. And this question remains unanswered.

Relevance for AI Policy

OpenAI’s recent push to help enterprises build, deploy, and manage fleets of agents and Anthropic’s parallel push toward enterprise agent workflows fit with the idea of AI as a platform instead of a single-purpose application.8 But the direction of deployment is not predetermined. Policy can either reinforce this platform trajectory by lowering the compliance and adoption frictions for worker-complementary uses or can steer firms toward narrower, compliance-safe substitutions. Laws and regulations change not only how fast AI diffuses, but which kinds of AI get built. Acemoglu and Restrepo’s model implies that laws and regulations meant to protect workers can harm them by steering firms toward “so-so” automation and away from reinstating, task-creating uses of AI.

State-level AI laws and bills increasingly focus on governing “high-risk” uses, yet “high risk” is defined broadly enough to sweep in a wide range of workflows. This matters because compliance regimes tied to consequential decisions can unintentionally discourage platform-style deployment, that is, open-ended, workflow-integrated systems that enable new tasks, and instead reward narrow, predefined uses that are easier to classify and control. In Acemoglu and Restrepo’s terms, that tilt restrains task creation, the central offset to job loss from displacement.

Colorado’s SB 24-205 law and New York’s S1169A bill are useful examples. Under SB 24-205, an AI system is “high risk” when it makes, or is a substantial factor in making, a “consequential decision” about a consumer, where “substantial factor” includes AI-generated content, predictions, or recommendations that are used as a basis for such a decision, not just full automation. “Consequential decisions” span material, legal, or similarly significant effects in education, employment, financial and lending services, housing, healthcare, law, and government. AI tools that merely support screening, prioritization, and routing in these settings could therefore fall within the definition. New York’s AI Bill also targets high-risk AI systems used for consequential decisions and layers obligations on deployers, such as notice, appeal and human review, and an audit-and-accountability posture.

Policies like Colorado’s and New York’s can tilt firms toward the wrong margin of AI adoption. When compliance duties are triggered primarily by AI’s role in “consequential decisions,” firms have an incentive to concentrate AI deployment in domains that are unlikely to be classified as high risk, that is, often narrow, procedural, back-office tasks. That pattern is consistent with so-so automation: low-liability substitution that strips out discrete tasks and reduces hours or headcount without the broader redesign of products and workflows that expands output and generates new human-complementary roles. The result is modest or uncertain productivity gains coupled with a weaker reinstatement effect because AI is used mainly to replace tasks within existing job descriptions rather than to open a frontier of new tasks at scale.

Firms may also respond by sticking with conventional software to avoid regulatory burden. When a statute targets “AI systems,” companies can have an incentive to shift investment toward non-AI, or more lightly classified, rules-based, automation that delivers similar substitution in practice but sits outside the law’s definitions and compliance regime. The economic concern is not that such software is inherently bad; it is that it is typically engineered around predetermined tasks and fixed workflows rather than functioning as a flexible platform that workers can steer toward new, complementary tasks. Minimizing legal exposure can again shift the direction of adoption toward so-so automation: task replacement with weaker offsetting forces and a thinner path to broad-based wage and employment gains.

State lawmakers face a choice: adopting policies that steer firms toward socially productive AI adoption, incentivizing so-so automation, or simply letting markets decide with lighter-touch regulations. Acemoglu and Restrepo’s task-based framework is valuable because it clarifies what “good” looks like in economic terms. Socially beneficial automation is not defined by whether it uses AI, but by whether it (1) delivers meaningful productivity gains and (2) expands the set of tasks in which workers remain productively employed through new roles, redesigned workflows, and greater scale. So-so automation fails on both margins: it replaces labor in narrow tasks, yields limited productivity improvements, and creates too little new work to offset displacement.

What, then, can states do to tilt adoption toward productivity and task creation rather than so-so automation?

The first lever is incentives, which shape the direction of deployment without miring innovation in red tape. States can use procurement and modernization budgets to make government an early customer of worker-complementary tools, especially in areas with backlogs, such as benefits administration, fraud detection, records management, and permitting. Workforce training and transition pathways help workers move into new complementary tasks that AI creates. And states can design competitive grants, pilots, and regulatory sandboxes that reward measurable productivity gains and demonstrable task expansion.

In parallel, states can adopt a narrow, abuse-focused regulatory posture: regulate outcomes and conduct, not the mere use of AI. That means using existing criminal and civil law where it already fits (fraud, identity theft, harassment), and adding targeted prohibitions only where genuinely new capabilities create new, clearly defined harms (e.g., non-consensual synthetic sexual material, synthetic child sexual abuse material, or tools designed to promote self-harm). The aim is not to micromanage “how” software is built or to require preemptive review of models, training data, or source code; it is to enforce accountability for concrete, demonstrable misconduct while keeping the compliance perimeter as small as possible so that platform-style, worker-augmenting deployments are not penalized for better capability. Done well, combining pro-innovation incentives with tightly scoped safeguards against the worst abuses is more likely to push firms toward the reinstating uses of AI that expand tasks and raise living standards.

Acemoglu and Restrepo’s framework is a warning to policymakers and advocates who try to “save jobs” by restricting AI use. If the rules steer firms toward narrow, compliance-safe substitution by penalizing platform-style uses that create new tasks, they can slow or disable the offsets that make technological progress compatible with broad-based employment. These “protections” will make job loss more likely, not less.

1 See Daron Acemoglu and Simon Johnson, Power and Progress: Our Thousand-Year Struggle Over Technology and Prosperity (New York: PublicAffairs, 2023).

2 Daron Acemoglu and Pascual Restrepo, “Artificial Intelligence, Automation and Work,” NBER Working Paper 24196 (2018), https://doi.org/10.3386/w24196.

3 Acemoglu published a macroeconomic forecast in which he predicts modest productivity growth over the next ten years (0.55% to 0.71%). See Daron Acemoglu, “The Simple Macroeconomics of AI,” NBER Working Paper 32487 (2024), accessed February 18, 2026, https://www.nber.org/papers/w32487.

4 As chronicled in Acemoglu and Johnson, Power and Progress.

5 Robert C. Feenstra, Robert Inklaar, and Marcel P. Timmer, “The Next Generation of the Penn World Table,” American Economic Review 105, no. 10 (2015): 3150-3182, www.ggdc.net/pwt.

6 Daron Acemoglu and Pascual Restrepo, “The Wrong Kind of AI? Artificial Intelligence and the Future of Labor Demand,” NBER Working Paper 25682 (2019), https://doi.org/10.3386/w25682.

7 What follows is a stylized example to illustrate the reinstatement effect.

8 See, for example, OpenAI and ServiceNow’s recent 3-year deal. Belle Lin, “OpenAI and ServiceNow Strike Deal to Put AI Agents in Business Software,” The Wall Street Journal, January 20, 2026, https://www.wsj.com/articles/openai-and-servicenow-strike-deal-to-put-ai-agents-in-business-software-57d1da5c?reflink=desktopwebshare_permalink. See also Anthropic’s recent work automating back office work at Goldman Sachs. Hugh Son, “Goldman Sachs taps Anthropic’s Claude to automate accounting, compliance roles,” CNBC, February 6, 2026, https://www.cnbc.com/2026/02/06/anthropic-goldman-sachs-ai-model-accounting.html.
